In the race toward artificial intelligence advancement, a shadow looms large over our technological progress: the staggering environmental cost. As AI models grow increasingly complex and integrate deeper into our daily lives, their energy demands are skyrocketing at an unprecedented rate, creating environmental challenges that extend far beyond electricity consumption alone.

The Alarming Growth of AI Energy Consumption

The computing power required for today’s sophisticated AI systems is growing exponentially, with some experts suggesting it doubles approximately every few months. This is not a gentle upward trend; it is a steep climb that threatens to outpace even our most ambitious energy planning.

To put this in perspective, AI could soon consume as much electricity as entire nations like Japan or the Netherlands, or large US states such as California. These comparisons highlight the potential strain AI development could place on global power grids.

The year 2024 witnessed a record 4.3% increase in global electricity demand, with AI expansion playing a significant role alongside growth in electric vehicles and industrial activity. Looking back to 2022, data centers, AI operations, and cryptocurrency mining collectively accounted for nearly 2% of worldwide electricity consumption—approximately 460 terawatt-hours (TWh).

Fast forward to 2024, and data centers alone consume around 415 TWh, roughly 1.5% of global electricity usage, growing at an annual rate of 12%. AI’s direct share currently remains relatively small at about 20 TWh (0.02% of global energy use, a broader base than electricity alone), but this figure is poised for dramatic growth.

Future Projections Paint a Concerning Picture

The forecasts for AI energy consumption are truly eye-opening:

- By the end of 2025, AI data centers worldwide could demand an additional 10 gigawatts (GW) of power—exceeding the entire power capacity of Utah.

- By 2026, global data center electricity usage could reach 1,000 TWh, comparable to Japan’s current consumption.

- By 2027, the global power demand from AI data centers is expected to hit 68 GW, nearly matching California’s total power capacity in 2022.
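
The gigawatt figures above measure power, while the terawatt-hour figures elsewhere in this article measure energy over a year. A rough way to relate the two is sketched below. It assumes a facility draws its rated power continuously all year, which is an upper bound rather than a measured value; the function name and the `utilization` parameter are illustrative and not taken from any of the cited forecasts.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours, ignoring leap years


def gw_to_twh_per_year(power_gw: float, utilization: float = 1.0) -> float:
    """Annual energy in TWh for an average power draw given in GW.

    utilization is the assumed fraction of the year spent at full load
    (1.0 means running flat out all year, which overstates real usage).
    """
    gwh = power_gw * HOURS_PER_YEAR * utilization
    return gwh / 1000  # 1 TWh = 1000 GWh


if __name__ == "__main__":
    for label, gw in [("2025 additional demand", 10.0), ("2027 AI demand", 68.0)]:
        print(f"{label}: {gw:.0f} GW is at most {gw_to_twh_per_year(gw):.1f} TWh/year")
```

At full utilization, 10 GW corresponds to about 88 TWh per year and 68 GW to about 596 TWh per year; any lower utilization scales those numbers down proportionally.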

Toward the end of the decade, projections vary by source but remain large. One forecast has global data center electricity consumption more than doubling to approximately 945 TWh by 2030, approaching 3% of worldwide electricity usage.
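
As a sanity check on these numbers, the 12% annual growth rate quoted earlier can be compounded forward from the 2024 figure of 415 TWh and compared with the 945 TWh projection. This is a minimal sketch that assumes a constant growth rate every year, which real forecasts do not; the function names are illustrative.

```python
# Figures quoted in the article; constant-rate compounding is an assumption.
BASE_YEAR = 2024
BASE_TWH = 415.0       # data center electricity use in 2024
ANNUAL_GROWTH = 0.12   # quoted annual growth rate
TARGET_YEAR = 2030
TARGET_TWH = 945.0     # projected 2030 consumption


def project_twh(base_twh: float, rate: float, years: int) -> float:
    """Compound base_twh forward by a fixed annual rate."""
    return base_twh * (1 + rate) ** years


def implied_rate(base_twh: float, target_twh: float, years: int) -> float:
    """Constant annual rate needed to grow from base_twh to target_twh."""
    return (target_twh / base_twh) ** (1 / years) - 1


if __name__ == "__main__":
    span = TARGET_YEAR - BASE_YEAR
    projected = project_twh(BASE_TWH, ANNUAL_GROWTH, span)
    needed = implied_rate(BASE_TWH, TARGET_TWH, span)
    print(f"{TARGET_YEAR} at 12% per year: {projected:.0f} TWh")
    print(f"Rate needed to reach {TARGET_TWH:.0f} TWh: {needed:.1%}")
```

Under these assumptions, steady 12% growth gives roughly 819 TWh in 2030, and reaching 945 TWh would take about 14.7% per year, so the projection implies that growth accelerates beyond the current rate.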

OPEC estimates suggest data center electricity consumption could even triple to 1,500 TWh by 2030. Goldman Sachs projects global power demand from data centers could surge by up to 165% compared to 2023 levels, with AI-specific data centers experiencing more than a fourfold increase in demand.

Some analysts suggest data centers could account for up to 21% of global energy demand by 2030 when including the energy required to deliver AI services to end-users.

Understanding AI’s Energy Footprint

AI’s energy consumption primarily divides into two major components: training and inference (actual usage).

Training massive models like GPT-4 requires enormous energy inputs. Training GPT-3, for example, reportedly consumed 1,287 megawatt-hours (MWh) of electricity, while GPT-4 is estimated to have required approximately 50 times more energy.

While training is energy-intensive, the day-to-day operation of these trained models can account for over 80% of AI’s total energy consumption. Reports indicate that a single ChatGPT query consumes roughly ten times more energy than a Google search (approximately 2.9 Wh versus 0.3 Wh).
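
The reported figures above can be combined to see why inference tends to dominate. The sketch below works out how many queries it takes for usage to match the one-off training energy. It treats the per-query and training numbers as exact, which they are not (they are reported estimates), and the names are illustrative.

```python
# Reported estimates quoted in the article, treated here as exact inputs.
GPT3_TRAINING_MWH = 1287.0
GPT4_MULTIPLIER = 50          # "approximately 50 times more energy"
CHATGPT_QUERY_WH = 2.9
GOOGLE_SEARCH_WH = 0.3

WH_PER_MWH = 1_000_000

GPT4_TRAINING_MWH = GPT3_TRAINING_MWH * GPT4_MULTIPLIER


def queries_matching_training(training_mwh: float, per_query_wh: float) -> float:
    """Number of queries whose combined energy equals the training energy."""
    return training_mwh * WH_PER_MWH / per_query_wh


if __name__ == "__main__":
    ratio = CHATGPT_QUERY_WH / GOOGLE_SEARCH_WH
    gpt3_queries = queries_matching_training(GPT3_TRAINING_MWH, CHATGPT_QUERY_WH)
    print(f"ChatGPT query vs Google search: {ratio:.1f}x")
    print(f"Estimated GPT-4 training energy: {GPT4_TRAINING_MWH:,.0f} MWh")
    print(f"Queries matching GPT-3 training: {gpt3_queries / 1e6:.0f} million")
```

On these numbers, about 444 million queries consume as much energy as training GPT-3 did, a volume that a widely used service can plausibly reach quickly, which is consistent with inference making up the larger share over a model's lifetime.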

As generative AI adoption accelerates across industries, companies are racing to build increasingly powerful—and consequently more energy-hungry—data centers to support these applications.

The Energy Supply Challenge: Can We Keep Up?

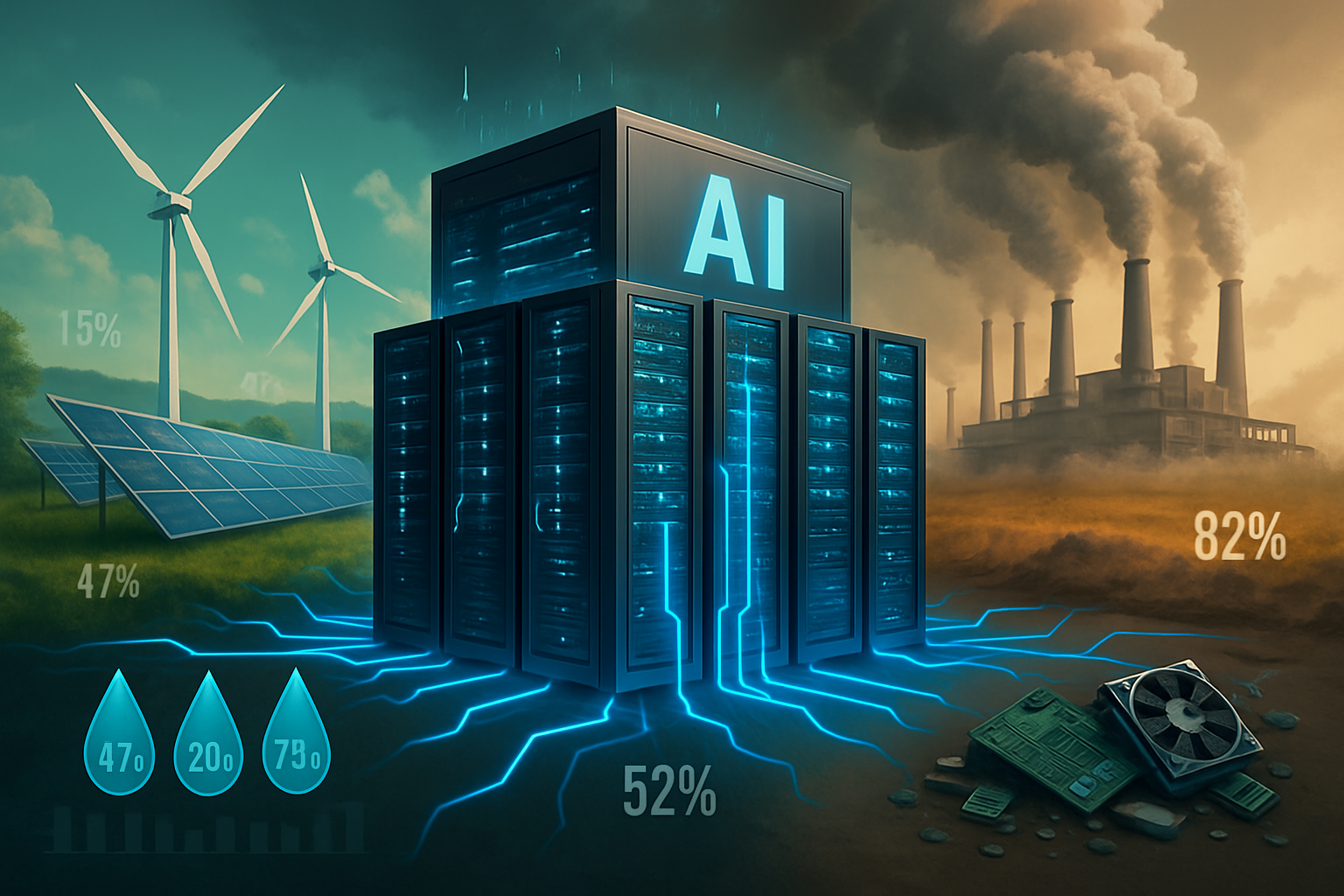

The critical question emerges: can our planet’s energy systems sustain this new demand? Our current energy mix includes fossil fuels, nuclear power, and renewables. Meeting AI’s growing appetite sustainably will require rapid scaling and diversification of energy generation methods.

Renewable energy sources—including solar, wind, hydroelectric, and geothermal—represent a crucial part of the solution. In the United States, renewables are projected to increase from 23% of power generation in 2024 to 27% by 2026.

Tech giants are making significant commitments. Microsoft, for instance, plans to purchase 10.5 GW of renewable energy between 2026 and 2030 specifically for its data centers. AI itself could also help optimize renewable energy usage, potentially reducing energy consumption by up to 60% in certain applications through smarter energy storage and grid management.

However, renewables face inherent challenges. Their intermittent nature—the sun doesn’t always shine, and the wind doesn’t always blow—creates reliability issues for data centers requiring continuous, uninterrupted power. Current battery storage solutions to address these fluctuations remain expensive and space-intensive. Additionally, integrating massive new renewable projects into existing power grids involves complex and time-consuming processes.

Nuclear Power: A Viable Alternative?

Given these challenges, nuclear power is gaining renewed attention as a potential solution for meeting AI’s enormous energy demands. Nuclear energy provides the crucial 24/7 power availability that data centers require while maintaining a low carbon footprint. Small Modular Reactors (SMRs) are generating particular interest due to their flexibility and enhanced safety features. Major tech companies including Microsoft, Amazon, and Google are actively exploring nuclear options.

Matt Garman, head of AWS, recently told the BBC that nuclear power represents a “great solution” for data centers, describing it as “an excellent source of zero carbon, 24/7 power.” He emphasized that energy planning is a fundamental aspect of AWS operations: “It’s something we plan many years out. We invest ahead. I think the world is going to have to build new technologies. I believe nuclear is a big part of that, particularly as we look 10 years out.”

Nevertheless, nuclear power isn’t without significant challenges. New reactor construction is notoriously time-consuming, expensive, and subject to complex regulatory requirements. Public perception of nuclear energy remains mixed, often influenced by historical accidents, despite significant safety improvements in modern reactor designs.

The rapid pace of AI development is also mismatched with the lengthy timelines required for nuclear plant construction. This gap could lead to increased reliance on fossil fuels in the short term, potentially undermining climate goals. Additionally, there are concerns that co-locating data centers with nuclear facilities could affect electricity prices and reliability for other consumers.

Beyond Electricity: AI’s Broader Environmental Impact

AI’s environmental footprint extends well beyond electricity consumption. Data centers generate substantial heat, requiring extensive cooling systems that consume vast quantities of water. A typical data center uses approximately 1.7 liters of water for every kilowatt-hour of energy consumed.

In 2022, Google’s data centers reportedly consumed about 5 billion gallons of fresh water, a 20% increase from the previous year. Other estimates put the requirement at up to two liters of water per kWh for cooling alone. To put this in context, global AI infrastructure could soon consume six times more water than the entire nation of Denmark.
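
The per-kWh water figures can be turned into volumes with simple multiplication. The sketch below applies the 1.7 and 2.0 liters per kWh estimates to a hypothetical facility using 1 TWh per year (a made-up size chosen for round numbers) and converts the reported 5 billion gallons into liters. It assumes US liquid gallons, and the function names are illustrative.

```python
LITERS_PER_US_GALLON = 3.785411784  # definition of the US liquid gallon
KWH_PER_TWH = 1_000_000_000


def cooling_water_liters(energy_kwh: float, liters_per_kwh: float) -> float:
    """Water volume in liters for a given energy use and water intensity."""
    return energy_kwh * liters_per_kwh


def gallons_to_liters(gallons: float) -> float:
    """Convert US liquid gallons to liters."""
    return gallons * LITERS_PER_US_GALLON


if __name__ == "__main__":
    # Hypothetical facility consuming 1 TWh of electricity per year.
    facility_kwh = 1 * KWH_PER_TWH
    for intensity in (1.7, 2.0):
        liters = cooling_water_liters(facility_kwh, intensity)
        print(f"At {intensity} L/kWh: {liters / 1e9:.1f} billion liters per year")

    google_liters = gallons_to_liters(5e9)
    print(f"5 billion gallons is about {google_liters / 1e9:.1f} billion liters")
```

Every terawatt-hour therefore corresponds to roughly 1.7 to 2 billion liters of water under these estimates, and the reported Google figure works out to about 18.9 billion liters.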

Electronic waste (e-waste) represents another growing concern. The rapid obsolescence of AI hardware—particularly specialized components like GPUs and TPUs—contributes to accelerated equipment turnover. Projections indicate AI could contribute to annual data center e-waste reaching five million tons by 2030.

The manufacturing process for AI chips and data center components also exacts a significant environmental toll. Production requires mining critical minerals like lithium and cobalt, often using environmentally damaging extraction methods.

Manufacturing a single AI chip can consume over 1,400 liters of water and 3,000 kWh of electricity. The demand for new hardware is also driving expansion of semiconductor fabrication facilities, which frequently leads to construction of additional gas-powered energy plants.

Carbon emissions remain a central concern. When AI is powered by electricity generated from fossil fuels, it contributes to the climate change crisis. Training a single large AI model can reportedly generate as much CO2 as hundreds of American households produce annually.

Environmental reports from major technology companies reflect AI’s growing carbon footprint. Microsoft’s annual emissions increased by approximately 40% between 2020 and 2023, primarily due to data center construction for AI applications. Google reported that its total greenhouse gas emissions have increased by nearly 50% over the past five years, with AI data center power demands being a significant contributor.
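
Because the two companies report increases over different time spans, converting each to an equivalent annual rate makes them easier to compare. This is a rough sketch that assumes smooth compound growth, takes Microsoft’s span as three years (2020 to 2023), and rounds Google’s "nearly 50%" up to 50%; the function name is illustrative.

```python
def annualized_growth(total_increase: float, years: int) -> float:
    """Constant yearly rate that compounds to total_increase over the period.

    total_increase is a fraction, so 0.40 means a 40% rise overall.
    """
    return (1 + total_increase) ** (1 / years) - 1


if __name__ == "__main__":
    reports = [
        ("Microsoft, 2020 to 2023", 0.40, 3),
        ("Google, past five years", 0.50, 5),  # "nearly 50%" rounded up
    ]
    for label, increase, years in reports:
        rate = annualized_growth(increase, years)
        print(f"{label}: about {rate:.1%} per year")
```

On that basis, Microsoft’s emissions grew by about 11.9% per year and Google’s by about 8.4% per year.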

Finding a Sustainable Path Forward

As we navigate the AI revolution, finding sustainable solutions to these energy and environmental challenges is imperative. Several approaches show promise:

1. Energy Efficiency Innovations

Developing more energy-efficient AI algorithms and hardware could significantly reduce power consumption. Recent research demonstrates that optimized AI models can achieve comparable performance with substantially lower energy requirements. Companies like Google and NVIDIA are investing heavily in chips designed specifically for AI workloads that deliver more computational power per watt.

2. Renewable Energy Integration

Strategic placement of data centers in regions with abundant renewable energy resources can maximize sustainable power utilization. Microsoft, Google, and other tech giants are increasingly locating facilities near hydroelectric, solar, and wind power sources. Advanced power purchase agreements (PPAs) are also enabling companies to directly fund new renewable energy projects.

3. Circular Economy Approaches

Extending hardware lifecycles and implementing comprehensive recycling programs can address the e-waste challenge. Some companies are exploring modular data center designs that allow for component upgrades rather than complete system replacements. Recovering valuable materials from decommissioned equipment reduces both waste and the environmental impact of new component manufacturing.

4. Water Conservation Strategies

Implementing advanced cooling technologies like liquid immersion cooling can dramatically reduce water consumption. Some data centers are also exploring the use of non-potable water sources and water recycling systems to minimize freshwater usage.

5. Carbon Offsetting and Removal

While reducing emissions should be the primary goal, carbon offset programs and direct air capture technologies can help mitigate unavoidable emissions. Several tech companies have established carbon-negative goals, committing to remove more carbon from the atmosphere than they emit.

Conclusion: Balancing Progress with Responsibility

The AI revolution promises tremendous benefits across virtually every sector of society, from healthcare and education to climate science and economic productivity. However, these advances must not come at an unsustainable environmental cost.

As we continue developing increasingly powerful AI systems, the technology industry, policymakers, and consumers must collaborate to ensure that our pursuit of artificial intelligence doesn’t undermine the natural systems upon which we all depend. This will require thoughtful regulation, significant investment in sustainable infrastructure, and a commitment to measuring and minimizing AI’s environmental footprint.

The coming years will be critical in determining whether AI becomes a net positive or negative force for environmental sustainability. By acknowledging the challenges now and implementing forward-thinking solutions, we can harness AI’s transformative potential while preserving our planet for future generations.

The path forward requires not just technological innovation but also a fundamental rethinking of how we value and measure progress. Perhaps the ultimate test of artificial intelligence will be whether it helps us become more intelligent about our relationship with the natural world.